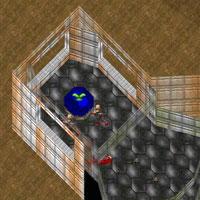

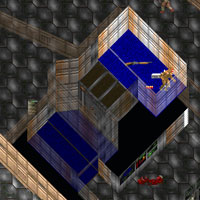

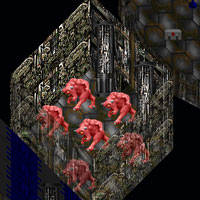

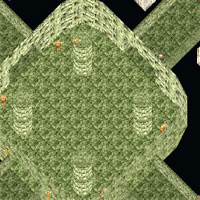

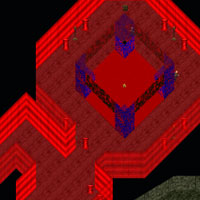

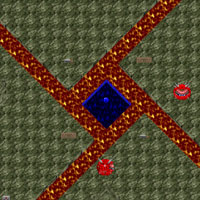

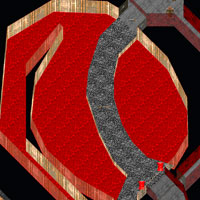

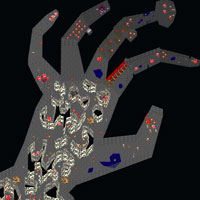

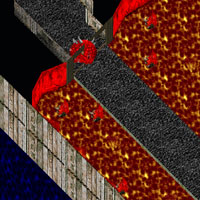

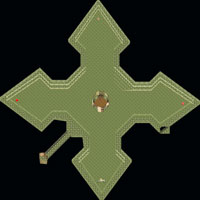

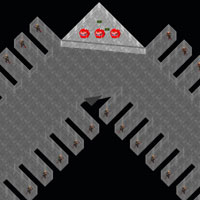

I wrote a custom Java program to parse the Doom WAD file format and render all the levels in a simple oblique projection. All the hidden areas are shown, as are the sprites from every skill level. I wrote all the 3D code myself, and I’m not exactly stellar at higher math, so these may not look as good as they could. I also haven’t settled on the best presentation, so I may regenerate these maps as I find clearer ways to represent all the information. Technical details on these maps are at the bottom of the page.

Maps

Technical Details

The Doom WAD format is very well documented. Seeing as nearly every new device with a screen and buttons gets a Doom port written for it, there’s plenty of info about how the game works. In a nutshell, a Doom map is really a 2D blueprint in which each area has its own floor and ceiling height. The game is not truly 3D, as there are no floors on top of floors, such as in a two-story house. This makes it very suitable for mapping, since viewed from overhead you can see everything at once without overlap. Even if I could decode other 3D games and render them, they would not look very good, because there would be no way to show everything without some areas obscuring others.
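Because the format is so well documented, the core of a reader is short. The sketch below is not the parser used for these maps; it is a minimal Java illustration that assumes the whole WAD has already been loaded into a byte array. It follows the documented layout: a 12-byte header ("IWAD" or "PWAD", lump count, directory offset), a directory of 16-byte entries (offset, size, 8-byte name), all little-endian, and a SECTORS lump of 26-byte records whose first two fields are the floor and ceiling heights that turn the 2D blueprint into something 3D. The names `WadSketch`, `Lump` and `Sector` are made up for this example.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

// Hypothetical minimal WAD reader; assumes the entire file is already in memory.
class WadSketch {
    // One directory entry: where a lump lives in the file and what it is called.
    record Lump(String name, int offset, int size) {}

    // One SECTORS record, keeping only the fields that give a map its heights.
    record Sector(int floorHeight, int ceilingHeight, String floorFlat, String ceilingFlat) {}

    // Reads an 8-byte, NUL-padded ASCII name at the buffer's current position.
    static String readName(ByteBuffer buf) {
        byte[] raw = new byte[8];
        buf.get(raw);
        int len = 0;
        while (len < 8 && raw[len] != 0) len++;
        return new String(raw, 0, len, StandardCharsets.US_ASCII);
    }

    // Parses the 12-byte header, then the directory it points to.
    static List<Lump> readDirectory(byte[] wad) {
        ByteBuffer buf = ByteBuffer.wrap(wad).order(ByteOrder.LITTLE_ENDIAN);
        String id = new String(wad, 0, 4, StandardCharsets.US_ASCII);
        if (!id.equals("IWAD") && !id.equals("PWAD")) {
            throw new IllegalArgumentException("not a WAD: " + id);
        }
        int numLumps = buf.getInt(4);  // absolute reads: lump count...
        int dirOffset = buf.getInt(8); // ...and where the directory starts
        List<Lump> lumps = new ArrayList<>();
        buf.position(dirOffset);
        for (int i = 0; i < numLumps; i++) {
            int filePos = buf.getInt();
            int size = buf.getInt();
            lumps.add(new Lump(readName(buf), filePos, size));
        }
        return lumps;
    }

    // Decodes a SECTORS lump: 26 bytes per sector.
    static List<Sector> readSectors(byte[] wad, Lump lump) {
        ByteBuffer buf = ByteBuffer.wrap(wad, lump.offset(), lump.size())
                                   .order(ByteOrder.LITTLE_ENDIAN);
        List<Sector> sectors = new ArrayList<>();
        for (int i = 0; i < lump.size() / 26; i++) {
            short floor = buf.getShort();
            short ceiling = buf.getShort();
            String floorFlat = readName(buf);
            String ceilingFlat = readName(buf);
            buf.getShort(); // light level (skipped here)
            buf.getShort(); // special type
            buf.getShort(); // tag
            sectors.add(new Sector(floor, ceiling, floorFlat, ceilingFlat));
        }
        return sectors;
    }
}
```

A real map loader would find the SECTORS lump that follows a map marker such as `E1M1` or `MAP01`, together with VERTEXES, LINEDEFS and SIDEDEFS, which between them describe the blueprint.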

My renderer was written from scratch. I might have been able to use some existing libraries out there, but my needs were a bit unique. For one, most 3D rendering is done in perspective: farther objects appear smaller than nearer ones. But for this project I wanted things “flattened out”, more like an isometric or oblique drafting drawing. Secondly, most 3D programming is intended for immediate screen display, and is thus limited to the screen resolution of your computer. Rendering some of these maps at full 1:1 resolution would require an impossibly large screen. So I rolled my own simple 3D renderer. It’s not fast, but it does exactly what I want and can output images of almost unlimited size.
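To make the difference from perspective concrete, here is one possible oblique projection in Java. It is an assumption, not necessarily the projection used for these maps: the floor plan (map x and y) is drawn at true shape, and each unit of height shifts a point by a fixed screen offset controlled by the hypothetical parameters `heightScale` and `angleDegrees`. Nothing is divided by distance, so an object at one end of the map is drawn at the same size as an identical one at the other end.

```java
// Hypothetical oblique projection: true-shape floor plan, height drawn as a
// fixed screen offset, and no perspective divide anywhere.
final class Oblique {
    private final double dx, dy; // screen offset produced by one unit of height

    Oblique(double heightScale, double angleDegrees) {
        double a = Math.toRadians(angleDegrees);
        this.dx = heightScale * Math.cos(a);
        this.dy = heightScale * Math.sin(a);
    }

    /**
     * Maps a Doom point (x, y on the map, z = height) to {screenX, screenY, depth}.
     * screenY is negated so it grows downward, as in image files.
     * All points that land on the same pixel lie on one projection line, and along
     * that line the higher point is the nearer one for a viewer looking down,
     * so -z works as a depth value (smaller = nearer).
     */
    double[] project(double x, double y, double z) {
        double sx = x + z * dx;
        double sy = -(y + z * dy);
        return new double[] { sx, sy, -z };
    }
}
```

For example, with `new Oblique(1.0, 0.0)` the points (3, 4, 2) and (1, 4, 4) both land on screen position (5, -4); the second is higher, so it gets the smaller depth (-4 versus -2) and would win a depth test.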

Pixel data is written directly to a BMP on disk. While this is slow, it means I can create images larger than my physical memory would otherwise permit. It also means I can recover partial output after a crash, which is very useful in debugging. For 3D rendering I wrap this BMP object in a Z buffer class, which creates a parallel file of 32-bit floating-point numbers recording each pixel’s depth. For small images, both the BMP and Z buffer classes do everything in memory and write the results out at the end (much faster!).
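Writing pixels straight to disk is practical because an uncompressed 24-bit BMP is easy to address: after a 54-byte header, rows are stored bottom-up, each padded to a multiple of 4 bytes, with pixels in blue-green-red order. The Java sketch below shows the idea using `RandomAccessFile`. The `DiskBmp` class and its methods are hypothetical, not the code behind these maps; it seeks once per pixel, which is the slow-but-memory-light trade-off described above.

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

// Hypothetical disk-backed 24-bit BMP: each pixel is written into the file
// immediately, so the image never has to fit in memory.
class DiskBmp implements AutoCloseable {
    private static final int HEADER_SIZE = 54; // 14-byte file header + 40-byte info header
    private final RandomAccessFile file;
    private final int width, height;
    private final long rowSize; // bytes per row, padded to a multiple of 4

    DiskBmp(String path, int width, int height) throws IOException {
        this.width = width;
        this.height = height;
        this.rowSize = ((long) width * 3 + 3) & ~3L;
        long fileSize = HEADER_SIZE + rowSize * height;

        ByteBuffer h = ByteBuffer.allocate(HEADER_SIZE).order(ByteOrder.LITTLE_ENDIAN);
        h.put((byte) 'B').put((byte) 'M');
        h.putInt((int) fileSize).putInt(0).putInt(HEADER_SIZE); // file size, reserved, pixel offset
        h.putInt(40).putInt(width).putInt(height);              // info header size, dimensions (bottom-up)
        h.putShort((short) 1).putShort((short) 24);             // planes, bits per pixel
        h.putInt(0).putInt((int) (rowSize * height));           // no compression, pixel data size
        h.putInt(2835).putInt(2835).putInt(0).putInt(0);        // ~72 DPI, no palette

        file = new RandomAccessFile(path, "rw");
        file.setLength(fileSize); // pre-size the file so every pixel offset exists
        file.seek(0);
        file.write(h.array());
    }

    /** Writes one 0xRRGGBB pixel straight to its place on disk; y = 0 is the top row. */
    void setPixel(int x, int y, int rgb) throws IOException {
        if (x < 0 || x >= width || y < 0 || y >= height) {
            throw new IllegalArgumentException("pixel out of range: " + x + "," + y);
        }
        long offset = HEADER_SIZE + (long) (height - 1 - y) * rowSize + (long) x * 3;
        file.seek(offset);
        file.write(new byte[] { (byte) rgb, (byte) (rgb >> 8), (byte) (rgb >> 16) }); // stored as B, G, R
    }

    @Override
    public void close() throws IOException {
        file.close();
    }
}
```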

If you don’t know what a Z buffer is, allow me to explain (because I thought it was pretty neat when I first came to understand it). When you’re drawing a 3D scene you will often have two polygons that overlap. The computer decides which one appears in front by using a Z buffer. The Z buffer is a set of depth values, one for each pixel, that tells how “deep” each pixel is. When you draw the first polygon, each of its pixels is drawn and its depth is recorded in the Z buffer. When you draw the second polygon, the depth of each of its pixels is compared with the depth already stored for that pixel. If the new pixel is nearer, it is drawn and its depth replaces the stored value. If it is farther, it is skipped, because something already drawn is in front of it. Because this test happens pixel by pixel rather than polygon by polygon, even intersecting polygons render properly.
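The depth test itself is only a few lines. This hypothetical `ZBuffer` class keeps everything in memory (like the small-image mode mentioned above) and stores depth as 32-bit floats, with the assumed convention that a smaller value is nearer the viewer.

```java
import java.util.Arrays;

// Hypothetical in-memory Z buffer: one color and one depth per pixel.
// Convention assumed here: smaller depth = closer to the viewer.
class ZBuffer {
    final int width, height;
    final int[] color;
    final float[] depth;

    ZBuffer(int width, int height) {
        this.width = width;
        this.height = height;
        this.color = new int[width * height];
        this.depth = new float[width * height];
        Arrays.fill(depth, Float.POSITIVE_INFINITY); // nothing drawn yet: every pixel is "infinitely far"
    }

    /** Draws one pixel of a polygon only if it is nearer than what is already there. */
    boolean plot(int x, int y, float z, int rgb) {
        int i = y * width + x;
        if (z >= depth[i]) return false; // hidden behind something drawn earlier
        depth[i] = z;                    // remember the new nearest depth
        color[i] = rgb;
        return true;
    }
}
```

Since `plot` decides pixel by pixel, two polygons that pass through each other each win on their own side of the line where they cross.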

(Just to be clear, by depth I mean how far the polygon is from the viewer in the 3D scene. Polygons that are closer to the viewer should be drawn on top of polygons that are farther from the viewer.)

On these maps you’ll notice the walls facing away from the viewer are translucent. Translucent polygons pose a bit of a problem with Z buffering because a pixel no longer has a single depth to test against: what you see there is a mix of the translucent surface and whatever lies behind it. Suppose you have rendered two polygons, an opaque one with a translucent one in front of it, and then you render a third polygon at a depth between the first two. What happens? Either it isn’t drawn at all, because the Z buffer treats the translucent polygon as covering it, or it is drawn right over the top of the translucent polygon as if that polygon weren’t there. Both results are wrong. My solution was to have the renderer queue up translucent polygons and render them last. I don’t know if this is the “right” answer, but it seems to produce the proper result.
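Here is one way to flesh out the queue-and-render-last idea in Java. Only the queuing comes from the description above; the rest are assumptions borrowed from common practice: the queue is sorted back to front before drawing, queued pixels are blended over what is already there, and they are depth-tested against the opaque geometry but never write depth themselves. To stay short, it works on individual pixels (`Fragment`, a made-up record) rather than whole polygons, and `TwoPassRenderer` is likewise hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical two-pass renderer: opaque pixels go straight through the Z buffer,
// translucent ones are queued and blended in after all opaque drawing is done.
class TwoPassRenderer {
    record Fragment(int x, int y, float z, int rgb, float alpha) {}

    final int width;
    final int[] color;
    final float[] depth;
    private final List<Fragment> translucent = new ArrayList<>();

    TwoPassRenderer(int width, int height) {
        this.width = width;
        this.color = new int[width * height];
        this.depth = new float[width * height];
        Arrays.fill(depth, Float.POSITIVE_INFINITY);
    }

    void draw(Fragment f) {
        if (f.alpha() < 1f) {
            translucent.add(f); // defer: its final color depends on what ends up behind it
            return;
        }
        int i = f.y() * width + f.x();
        if (f.z() < depth[i]) { // ordinary Z buffer test for opaque pixels
            depth[i] = f.z();
            color[i] = f.rgb();
        }
    }

    /** Call once after all opaque drawing: blends queued fragments from far to near. */
    void flush() {
        translucent.sort((a, b) -> Float.compare(b.z(), a.z())); // larger z (farther) first
        for (Fragment f : translucent) {
            int i = f.y() * width + f.x();
            if (f.z() >= depth[i]) continue; // behind an opaque surface: hidden
            color[i] = blend(color[i], f.rgb(), f.alpha()); // depth is deliberately left unchanged
        }
        translucent.clear();
    }

    // Standard "over" blend of src onto dst, per 8-bit channel.
    static int blend(int dst, int src, float a) {
        int r = Math.round(((src >> 16) & 0xFF) * a + ((dst >> 16) & 0xFF) * (1 - a));
        int g = Math.round(((src >> 8) & 0xFF) * a + ((dst >> 8) & 0xFF) * (1 - a));
        int b = Math.round((src & 0xFF) * a + (dst & 0xFF) * (1 - a));
        return (r << 16) | (g << 8) | b;
    }
}
```

Replaying the scenario above (an opaque polygon far away, a translucent one near, then an opaque one in between) leaves the middle polygon visible through the translucent one, because the middle polygon is already in the Z buffer before any blending happens.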